April 26, 2023 feature

A power-efficient engine that can disentangle the visual attributes of objects

Most humans are innately able to identify the individual attributes of sensory stimuli, such as the objects they see or the sounds they hear. While artificial intelligence (AI) tools have become increasingly good at recognizing specific objects in images or other stimuli, they often cannot disentangle the individual attributes of those objects (e.g., their color or size).

Researchers at IBM Research Zürich and ETH Zürich recently developed a computationally efficient engine that unscrambles the separate attributes of a visual stimulus. This engine, introduced in a paper in Nature Nanotechnology, could ultimately help to tackle complex real-world problems faster and more efficiently.

"We have been working on developing the concept of neuro-vector-symbolic architecture (NVSA), which offers both perception and reasoning capabilities in a single framework," Abbas Rahimi, one of the researchers who carried out the study, told Tech Xplore. "In a NVSA, visual perception is partly done by a deep net in which the resulting representations are entangled in complex ways, which may be suitable for a specific task but make it difficult to generalize to slightly different situations. For instance, an entangled representation of a 'red small triangle' cannot reveal the conceptual attributes of the object such as its color, size, type, etc."

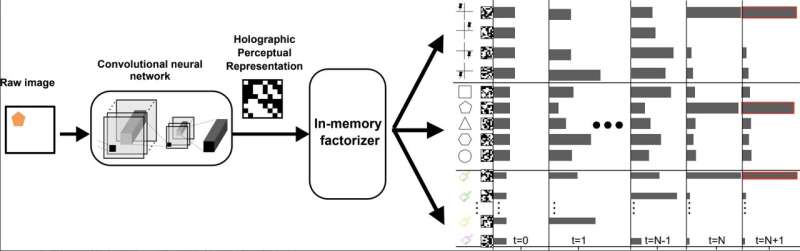

To overcome the limitations of existing NVSA architectures, Rahimi and his colleagues set out to create a new engine that could disentangle the visual representations of objects in images, representing their attributes separately. This engine, dubbed the "in-memory factorizer," is based on a variant of recurrent neural networks called resonator networks, which can iteratively solve a special form of factorization problem.

"In this elegant mathematical form, the attribute values and their entanglements are represented via high-dimensional holographic vectors," Rahimi explained. "They are holographic because the encoded information is equally distributed over all the components of the vector. In the resonator networks, these holographic representations are iteratively manipulated by the operators of vector-symbolic architectures to solve the factorization problem."

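To make the idea of an entangled holographic representation concrete, here is a minimal sketch. It assumes random bipolar vectors (entries of +1 or -1) and element-wise multiplication as the binding operator, a common choice in vector-symbolic architectures. The dimension, the attribute names and the helper functions are illustrative and are not taken from the paper.

```python
import random

D = 1024  # vector dimension (illustrative choice)
rng = random.Random(0)

def random_bipolar(dim):
    """Draw a random vector with entries in {-1, +1}."""
    return [1 if rng.random() < 0.5 else -1 for _ in range(dim)]

def bind(u, v):
    """Bind two vectors by element-wise multiplication."""
    return [a * b for a, b in zip(u, v)]

def similarity(u, v):
    """Normalized dot product: 1.0 for identical vectors, near 0 for unrelated ones."""
    return sum(a * b for a, b in zip(u, v)) / len(u)

# Hypothetical attribute values, each assigned its own random vector.
red, small, triangle = (random_bipolar(D) for _ in range(3))

# Entangled representation of a "red small triangle".
product = bind(bind(red, small), triangle)

# Unbinding with the known factors recovers the remaining one exactly,
# because every entry multiplied by itself gives +1.
recovered = bind(bind(product, small), triangle)

print(similarity(product, red))    # close to 0: the product hides its factors
print(similarity(recovered, red))  # 1.0
```

The product vector looks unrelated to each of its factors, which is why recovering all of them at once, without knowing any in advance, requires a search.
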
A resonator network is a type of recurrent neural network designed to solve factorization problems over high-dimensional vectors (i.e., problems that entail splitting a composite vector into the constituent vectors that, when combined, produce it). While resonator networks have proved to be effective for tackling many simple factorization problems, they typically suffer from two key limitations.

First, these networks can become trapped in an "infinite loop of search," a phenomenon known as a limit cycle. In addition, resonator networks are often unable to rapidly and efficiently solve large problems that involve several factors. The engine created by Rahimi and his colleagues overcomes both of these limitations.
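
The iterative search can be sketched in software as follows. This is a simplified, deterministic resonator network under stated assumptions: bipolar vectors, element-wise multiplication for binding, sequential updates of the factors, and a stopping rule that checks whether the decoded factors reproduce the input. The problem size, function names and stopping rule are illustrative choices, not details from the study.

```python
import random

def sign(x):
    """Bipolar sign; ties are broken towards +1."""
    return 1 if x >= 0 else -1

def dot(u, v):
    return sum(a * b for a, b in zip(u, v))

def bind(*vectors):
    """Element-wise product of any number of bipolar vectors."""
    out = [1] * len(vectors[0])
    for v in vectors:
        out = [a * b for a, b in zip(out, v)]
    return out

def resonator(product, codebooks, max_iters=50):
    """Return (indices, iterations), or (None, max_iters) without convergence."""
    # Start each estimate as the superposition of all candidates.
    estimates = [[sign(sum(col)) for col in zip(*book)] for book in codebooks]
    for iteration in range(1, max_iters + 1):
        for f, book in enumerate(codebooks):
            # Unbind the other factors' current estimates from the product.
            others = [e for g, e in enumerate(estimates) if g != f]
            query = bind(product, *others)
            # Similarity of the query to every candidate in this codebook.
            sims = [dot(query, cand) for cand in book]
            # Project back: similarity-weighted superposition, then sign.
            estimates[f] = [sign(dot(sims, col)) for col in zip(*book)]
        # Decode each estimate to its nearest candidate.
        indices = [max(range(len(book)), key=lambda i: dot(est, book[i]))
                   for est, book in zip(estimates, codebooks)]
        # Stop once the decoded factors reproduce the product exactly.
        if bind(*[book[i] for book, i in zip(codebooks, indices)]) == product:
            return indices, iteration
    return None, max_iters

# Small illustrative problem: 3 factors, 5 candidates each (125 combinations).
rng = random.Random(1)
DIM, FACTORS, CANDIDATES = 2048, 3, 5
codebooks = [[[1 if rng.random() < 0.5 else -1 for _ in range(DIM)]
              for _ in range(CANDIDATES)] for _ in range(FACTORS)]
truth = [3, 0, 4]
product = bind(*[book[i] for book, i in zip(codebooks, truth)])

indices, iterations = resonator(product, codebooks)
print(indices, iterations)
```

On a problem this small the search settles quickly. As the number of candidate combinations grows, a deterministic loop like this one is more likely to revisit the same sequence of states indefinitely, which is the limit-cycle behavior described above.
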

"Our in-memory factorizer enriches the resonator networks on analog in-memory computing (AIMC) hardware by naturally harnessing intrinsic memristive device noise to prevent the resonator networks from getting stuck in the limit cycles," Rahimi said. "This intrinsic device stochasticity, based on a phase-change material, upgrades a deterministic resonator network to a stochastic resonator network by permitting noisy computations to be performed in every single iteration. Furthermore, our in-memory factorizer introduces threshold-based nonlinear activations and convergence detection."

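As a rough software analogy for the two additions Rahimi describes, the sketch below perturbs a similarity vector with Gaussian noise, zeroes every entry below a threshold, and reports convergence when exactly one candidate clears a detection threshold. The Gaussian noise model, the threshold values and the function names are assumptions made for illustration. In the hardware, the noise comes from the phase-change devices themselves rather than from a random number generator.

```python
import random

def noisy_activation(similarities, noise_std, threshold, rng):
    """Add Gaussian noise to each similarity, then zero values below the threshold."""
    noisy = [s + rng.gauss(0.0, noise_std) for s in similarities]
    return [s if s >= threshold else 0.0 for s in noisy]

def has_converged(activations, detection_threshold):
    """Report convergence when exactly one candidate clears the detection threshold."""
    return sum(1 for a in activations if a >= detection_threshold) == 1

rng = random.Random(0)

# Normalized similarities of a query to four hypothetical candidates.
similarities = [0.05, -0.02, 0.9, 0.1]

# Without noise, thresholding simply sparsifies the vector.
clean = noisy_activation(similarities, 0.0, 0.2, rng)
print(clean)                      # [0.0, 0.0, 0.9, 0.0]
print(has_converged(clean, 0.8))  # True

# With noise, two tied candidates no longer receive identical weights,
# so repeated iterations need not retrace the same sequence of states.
tied = noisy_activation([0.5, 0.5], 0.05, 0.0, rng)
print(tied[0] != tied[1])
```

Because each pass through the loop sees slightly different similarities, a search that would otherwise cycle through the same states can be nudged onto a new trajectory.
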
As part of their recent study, the researchers created a prototype of their engine using two in-memory computing chips. These chips were based on phase-change memristive devices, promising systems that can both perform computations and store information.

"The experimental AIMC hardware setup contains two phase-change memory cores, each with 256 × 256 unit cells to perform matrix-vector multiplication (MVM) and transposed MVM with a high degree of parallelism and in constant time," Rahimi said. "In effect, our in-memory factorizer reduces the computational time complexity of vector factorization to merely the number of iterations."

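The two operations Rahimi mentions map onto the two halves of one resonator update: an MVM compares a query against every stored candidate, and a transposed MVM turns the resulting similarities back into an estimate. The sketch below shows both on a tiny hand-made codebook. The values and function names are illustrative only.

```python
def mvm(matrix, vector):
    """Matrix-vector product: one dot product per stored row."""
    return [sum(m * v for m, v in zip(row, vector)) for row in matrix]

def transposed_mvm(matrix, vector):
    """Product with the transpose, reading the same stored matrix column-wise."""
    return [sum(m * v for m, v in zip(col, vector)) for col in zip(*matrix)]

# Three candidate vectors of dimension four, stored as the rows of one matrix.
codebook = [
    [ 1,  1, -1, -1],
    [ 1, -1,  1, -1],
    [-1, -1, -1,  1],
]
query = [1, -1, 1, -1]

similarities = mvm(codebook, query)                  # compare query to each row
projection = transposed_mvm(codebook, similarities)  # weighted sum of the rows

print(similarities)  # [0, 4, -2]
print(projection)    # [6, -2, 6, -6]
```

In software, each call costs one multiply-add per matrix entry. According to Rahimi, the in-memory cores carry out each of these products in parallel and in constant time, which is why the overall running time is governed by the number of iterations.
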
In initial evaluations, the in-memory factorizer created by Rahimi and his colleagues achieved highly promising results, solving problems at least five orders of magnitude larger than those that could be solved using a conventional system based on resonator networks. Remarkably, the engine can also solve these problems faster while consuming less computational power.

"A breakthrough finding of our study is that the inevitable stochasticity presented in AIMC hardware is not a curse but a blessing," Rahimi said. "When coupled with thresholding techniques, this stochasticity could pave the way for solving at least five orders of magnitude larger combinatorial problems which were previously unsolvable within the given constraints."

In the future, the new factorization engine created by this team of researchers could be used to reliably disentangle the individual visual attributes of objects in images captured by cameras or other sensors. It could also be applied to other complex factorization problems, opening exciting new avenues in AI research.

"The massive number of computationally expensive MVM operations involved during factorization is accelerated by our in-memory factorizer," Rahimi added. "Next, we are looking to design and test large scale AIMC hardware capable of performing larger MVMs."

More information: Jovin Langenegger et al., In-memory factorization of holographic perceptual representations, Nature Nanotechnology (2023). DOI: 10.1038/s41565-023-01357-8

© 2023 Science X Network