April 8, 2024 feature

A fusion SLAM system that enhances the sensing and localization capabilities of biped climbing robots

Climbing robots could have many valuable real-world applications, ranging from maintenance tasks on roofs and other tall structures to the delivery of parcels or survival kits to hard-to-reach locations. To be deployed successfully in real-world settings, however, these robots must be able to sense and map their surroundings effectively, while also accurately estimating their own location within the mapped environment.

Researchers at Guangdong University of Technology recently developed a method that enhances the ability of a bipedal climbing robot to estimate its state and map its surroundings while climbing a truss (i.e., a structure of straight, interconnected members, typically arranged in triangles, such as a bridge, a roof or another human-made structure). Their method, described in a paper published in Robotics and Autonomous Systems, is based on simultaneous localization and mapping (SLAM).

"Our recent work deploys SLAM methods to a particular biped climbing robot (BiCR), which was developed by our lab, named the Biomimetic and Intelligent Robotics Lab," Weinan Chen, co-author of the paper, told Tech Xplore.

"BiCR is an electromechanical system similar to a moving manipulator that is able to move via grippers at both ends and rotate with multiple joints. The robot can be used for installation, maintenance, and inspection in high-altitude and high-risk environments, such as construction site scaffolding and power towers."
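Because BiCR moves by anchoring one gripper and swinging the other, its joint encoders alone can give a rough estimate of where the free gripper ends up after each step; the researchers refer to this ingredient of their system as encoder dead reckoning. The sketch below illustrates the general idea under heavy simplifying assumptions: it models the robot as a planar arm with three revolute joints, the link lengths in `LINK_LENGTHS` are hypothetical, and the names `Pose2D`, `forward_kinematics` and `dead_reckon` are illustrative only. BiCR's real kinematics are three-dimensional and are not described in the article, and the sketch ignores the reversal of the kinematic chain that happens when the robot swaps its anchored gripper.

```python
import math
from dataclasses import dataclass


@dataclass(frozen=True)
class Pose2D:
    """Planar pose: position (metres) and heading (radians)."""
    x: float
    y: float
    theta: float

    def compose(self, other: "Pose2D") -> "Pose2D":
        """Apply `other`, expressed in this pose's frame, on top of this pose."""
        c, s = math.cos(self.theta), math.sin(self.theta)
        theta = self.theta + other.theta
        return Pose2D(
            self.x + c * other.x - s * other.y,
            self.y + s * other.x + c * other.y,
            math.atan2(math.sin(theta), math.cos(theta)),  # wrap to (-pi, pi]
        )


# Hypothetical link lengths (metres) of a simplified planar three-joint arm.
LINK_LENGTHS = (0.3, 0.3, 0.1)


def forward_kinematics(joint_angles):
    """Pose of the free gripper in the frame of the anchored gripper."""
    pose = Pose2D(0.0, 0.0, 0.0)
    for angle, length in zip(joint_angles, LINK_LENGTHS):
        pose = pose.compose(Pose2D(0.0, 0.0, angle))   # rotate at the joint
        pose = pose.compose(Pose2D(length, 0.0, 0.0))  # move along the link
    return pose


def dead_reckon(start, steps):
    """Chain one gripper-to-gripper transform per climbing step.

    Simplification: assumes the free gripper's frame becomes the new anchor
    frame after each step, so the transforms can be composed directly.
    """
    pose = start
    for joint_angles in steps:
        pose = pose.compose(forward_kinematics(joint_angles))
    return pose


# Usage: one straight step, then a step with the first joint turned 90 degrees.
estimate = dead_reckon(Pose2D(0.0, 0.0, 0.0), [(0.0, 0.0, 0.0), (math.pi / 2, 0.0, 0.0)])
print(estimate)  # roughly x=0.7, y=0.7, theta=pi/2 (up to floating-point error)
```

Errors in the encoder readings accumulate with every composed step, which is one reason a system like BiCR-SLAM would combine this estimate with exteroceptive sensing such as LiDAR.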

The primary objective of the study by Chen and his colleagues was to enable a bipedal climbing robot to autonomously localize itself and map its surroundings while navigating truss structures. They tailored their SLAM-based approach specifically to BiCR, the bipedal climbing robot previously developed at their lab.

"Since there are many differences in the configurations and working environments of the BiCR and other robots (ground vehicles, UAVs, etc.), this paper proposes a method that fuses robot joint information and environmental information to improve the localization accuracy of the BiCR," Chen said.

BiCR-SLAM, the simultaneous localization and mapping system developed by the researchers, uses information about the BiCR robot's configuration, a LiDAR sensing system and visual data collected by cameras to localize the robot and map the truss it is climbing. The framework can estimate the pose of the robot's gripper and build a map of the poles surrounding it, so that the robot can better plan its actions while climbing.
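The article does not say how BiCR-SLAM detects or represents poles. One common and simple way to turn LiDAR returns into a pole landmark, assumed here purely for illustration, is to take the points that fall on a pole's surface in a horizontal slice and fit a circle to them: the circle's center gives the landmark position and its radius the pole size. The sketch below uses the algebraic (Kasa) least-squares circle fit; the function name `fit_pole` and the scan data are hypothetical, and segmenting the pole points out of a full scan is not shown.

```python
import math


def _det3(m):
    """Determinant of a 3x3 matrix given as nested lists."""
    return (m[0][0] * (m[1][1] * m[2][2] - m[1][2] * m[2][1])
            - m[0][1] * (m[1][0] * m[2][2] - m[1][2] * m[2][0])
            + m[0][2] * (m[1][0] * m[2][1] - m[1][1] * m[2][0]))


def fit_pole(points):
    """Kasa least-squares circle fit: returns (cx, cy, radius).

    Fits x^2 + y^2 + D*x + E*y + F = 0 to 2D points (metres), e.g. points
    sampled from one visible side of a pole.
    """
    n = len(points)
    if n < 3:
        raise ValueError("need at least three points")
    # Center the data first to keep the normal equations well conditioned.
    mx = sum(p[0] for p in points) / n
    my = sum(p[1] for p in points) / n
    ata = [[0.0] * 3 for _ in range(3)]
    atb = [0.0] * 3
    for px, py in points:
        x, y = px - mx, py - my
        row = (x, y, 1.0)
        rhs = -(x * x + y * y)
        for i in range(3):
            atb[i] += row[i] * rhs
            for j in range(3):
                ata[i][j] += row[i] * row[j]
    det = _det3(ata)
    # Hadamard's inequality bounds det by the diagonal product, so this
    # ratio is a scale-free test for (nearly) collinear points.
    if abs(det) <= 1e-12 * ata[0][0] * ata[1][1] * ata[2][2]:
        raise ValueError("points are (nearly) collinear")
    # Cramer's rule for the coefficients [D, E, F].
    coeffs = []
    for col in range(3):
        m = [list(line) for line in ata]
        for r in range(3):
            m[r][col] = atb[r]
        coeffs.append(_det3(m) / det)
    d, e, f = coeffs
    cx, cy = -d / 2.0, -e / 2.0
    radius = math.sqrt(cx * cx + cy * cy - f)
    return cx + mx, cy + my, radius


# Usage: a hypothetical pole of 5 cm radius centred at (1.0, 2.0),
# seen by the LiDAR over a 120-degree arc.
scan = [(1.0 + 0.05 * math.cos(math.radians(a)), 2.0 + 0.05 * math.sin(math.radians(a)))
        for a in range(-60, 61, 10)]
print(fit_pole(scan))  # close to (1.0, 2.0, 0.05)
```

The algebraic fit is chosen here because it reduces to a small linear solve; with noisy scans of short arcs it is known to be biased, and a geometric (orthogonal-distance) fit is often used to refine it.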

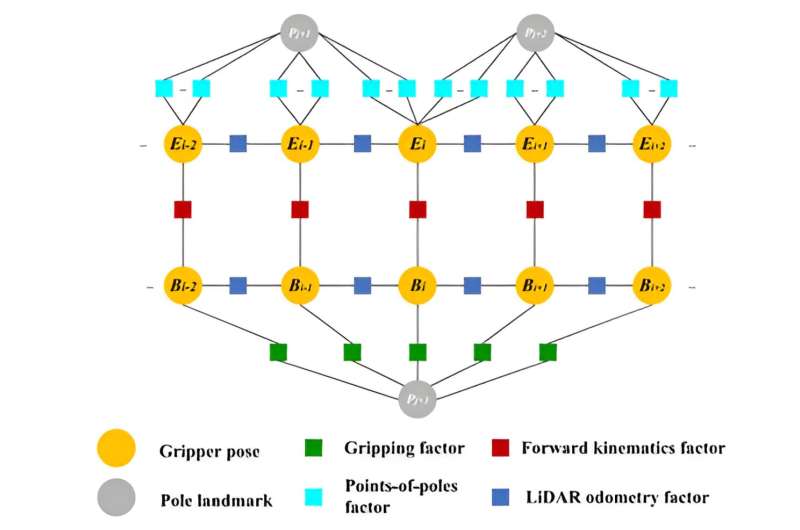

"The framework consists of four components: an encoder dead reckoning, a LiDAR odometry estimation tool, a pole landmark mapping model, and a global optimization technique," Jianhong Xu, another author of the paper, said. "In the global optimization, we propose a multi-source fusion factor graph to jointly optimize the robot localization and pole landmarks."
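The article does not detail how the multi-source fusion factor graph is formulated. To give a rough idea of what "jointly optimizing" robot positions and pole landmarks means, the sketch below uses a drastically simplified, hypothetical one-dimensional case: gripper positions along a single pole and one pole landmark, linked by a prior, by two competing odometry sources (encoder and LiDAR), and by landmark observations. Because every factor here is linear with Gaussian noise, the most likely estimate is the solution of a weighted least-squares problem, solved through its normal equations. The names `Factor` and `solve_factor_graph` and all numbers are illustrative assumptions; a real system would work with 3D poses and nonlinear factors, solved iteratively (for example with Gauss-Newton or Levenberg-Marquardt).

```python
from dataclasses import dataclass


@dataclass
class Factor:
    """Linear Gaussian factor: sum(coeff * state[idx]) ~= measurement (std dev sigma)."""
    coeffs: dict  # variable index -> coefficient
    measurement: float
    sigma: float


def _gauss_solve(a, b):
    """Solve a @ x = b by Gaussian elimination with partial pivoting."""
    n = len(b)
    m = [list(row) + [b[i]] for i, row in enumerate(a)]
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(m[r][col]))
        if abs(m[pivot][col]) < 1e-12:
            raise ValueError("under-constrained graph (singular system)")
        m[col], m[pivot] = m[pivot], m[col]
        for r in range(col + 1, n):
            scale = m[r][col] / m[col][col]
            for c in range(col, n + 1):
                m[r][c] -= scale * m[col][c]
    x = [0.0] * n
    for r in range(n - 1, -1, -1):
        x[r] = (m[r][n] - sum(m[r][c] * x[c] for c in range(r + 1, n))) / m[r][r]
    return x


def solve_factor_graph(num_vars, factors):
    """Weighted least squares: build and solve the normal equations H x = g."""
    h = [[0.0] * num_vars for _ in range(num_vars)]
    g = [0.0] * num_vars
    for f in factors:
        w = 1.0 / f.sigma ** 2  # information weight: precise factors count more
        for i, ci in f.coeffs.items():
            g[i] += w * ci * f.measurement
            for j, cj in f.coeffs.items():
                h[i][j] += w * ci * cj
    return _gauss_solve(h, g)


# Hypothetical example: gripper positions 0-2 along a pole and one pole
# landmark (index 3), all 1D coordinates in metres.
factors = [
    Factor({0: 1.0}, 0.0, 0.001),           # prior: start at the origin
    Factor({1: 1.0, 0: -1.0}, 0.50, 0.02),  # encoder dead reckoning, step 1
    Factor({2: 1.0, 1: -1.0}, 0.50, 0.02),  # encoder dead reckoning, step 2
    Factor({1: 1.0, 0: -1.0}, 0.46, 0.01),  # LiDAR odometry, step 1
    Factor({2: 1.0, 1: -1.0}, 0.46, 0.01),  # LiDAR odometry, step 2
    Factor({3: 1.0, 0: -1.0}, 1.40, 0.01),  # pole observed from position 0
    Factor({3: 1.0, 2: -1.0}, 0.45, 0.01),  # pole observed from position 2
]
print(solve_factor_graph(4, factors))
```

When the two odometry sources disagree, the solution settles between them in proportion to their assumed precision, and the shared landmark observations add a further constraint tying the trajectory together.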

A notable advantage of BiCR-SLAM is that it simultaneously considers information from the bipedal robot's joints and data collected by its sensors. This allows the BiCR robot to map its surroundings and estimate its pose, using this information to plan its next moves and climb a truss safely.

"The system can also keep working in some low-texture and single-structure truss environments," Chen said. "To the best of our knowledge, BiCR-SLAM is the first SLAM system solution to incorporate BiCR's information for a truss map. This work can advance the development of BiCR and SLAM and improve BiCR's localization and navigation performance in autonomous operations."

While Chen and his collaborators designed their SLAM method specifically for the BiCR robot, it could in the future be adapted to other climbing robots. So far, the team has used a single LiDAR to sense poles within a small range around the robot, but they hope to extend the system's capabilities by using multiple LiDARs along with deep learning techniques.

"In our next studies, we plan to use multiple LiDARs to sense pole objects using deep learning approaches that are free from the calibration of the external parameters between various sensors," Chen added.

"We also plan to use the sensing configuration with a more extensive scanning range to improve segmentation accuracy and apply this work to the autonomous navigation of climbing robots. Specifically, we will integrate the motion planning part to realize an autonomous navigation function."

More information: BiCR-SLAM: A multi-source fusion SLAM system for biped climbing robots in truss environments. Robotics and Autonomous Systems (2024). DOI: 10.1016/j.robot.2024.104685.

© 2024 Science X Network