February 6, 2024 feature


A deep reinforcement learning approach to enhance autonomous robotic grasping and assembly

Semi-autonomous and autonomous robots are being introduced in a growing number of real-world environments, including industrial settings. Industrial robots could speed up the manufacturing of various products by assisting human workers with basic tasks and lightening their workload.

Two of the most crucial tasks in manufacturing are object grasping and product assembly, yet performing these tasks reliably with robotic systems can be challenging. One of the primary limitations of industrial robots on automated assembly lines is that they must be extensively programmed for specific tasks (e.g., grasping and assembling particular items), and this product-specific programming can be time-consuming.

Researchers at Qingdao University of Technology recently set out to address this limitation of industrial robots using deep reinforcement learning. Their paper, published in The International Journal of Advanced Manufacturing Technology, introduces new deep reinforcement learning algorithms that could reduce the time required to train industrial robots on new grasping and assembly tasks.

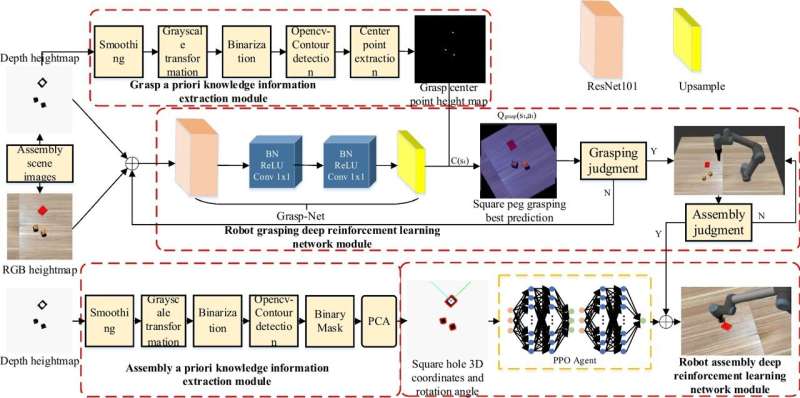

"This paper proposes a deep reinforcement learning-based framework for robot autonomous grasping and assembly skill learning," Chengjun Chen, Hao Zhang and their colleagues wrote in their paper.

"Meanwhile, a deep Q-learning-based robot grasping skill learning algorithm and a PPO-based robot assembly skill learning algorithm are presented, where a priori knowledge information is introduced to optimize the grasping action and reduce the training time and interaction data needed by the assembly strategy learning algorithm."
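For readers unfamiliar with deep Q-learning, the sketch below illustrates two generic ingredients it relies on: picking among discrete grasp actions by their predicted Q-values, and the bootstrapped target y = r + γ · max Q(s′, a′) that the network is trained toward. It is a minimal, hypothetical illustration, not the authors' implementation. In particular, the `prior_mask` that rules out implausible grasps is only one assumed way that prior knowledge could narrow the action space, and all function names are invented for this example.

```python
import random

def q_learning_target(reward, next_q_values, done, gamma=0.99):
    """Generic deep Q-learning target: y = r + gamma * max_a' Q(s', a')."""
    # A terminal transition has no future return to bootstrap from.
    if done:
        return reward
    return reward + gamma * max(next_q_values)

def select_grasp_action(q_values, prior_mask, epsilon=0.1, rng=random):
    """Epsilon-greedy choice restricted to actions allowed by a prior mask.

    q_values:   predicted Q-value for each discrete grasp action
    prior_mask: hypothetical prior knowledge; True where a grasp is plausible
                (for example, image pixels that lie on the target object)
    """
    allowed = [i for i, ok in enumerate(prior_mask) if ok]
    if not allowed:
        raise ValueError("prior mask rules out every action")
    # Explore: with probability epsilon, try a random allowed action.
    if rng.random() < epsilon:
        return rng.choice(allowed)
    # Exploit: take the allowed action with the highest predicted Q-value.
    return max(allowed, key=lambda i: q_values[i])
```

With `q = [0.2, 0.9, 0.5, 0.7]` and a mask that forbids action 1, a purely greedy call (`epsilon=0.0`) returns action 3, because the highest-valued action has been excluded by the prior.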

The robot training techniques introduced in the paper build on computer vision and machine learning tools developed in recent years. The researchers created a deep Q-learning-based algorithm designed to rapidly teach robots new object grasping skills, as well as a separate algorithm, based on proximal policy optimization (PPO), that trains robots to assemble specific objects.
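According to the researchers, the assembly algorithm is PPO-based. The core of standard PPO is a clipped surrogate objective, which limits how far a single update can move the policy away from the one that collected the training data. The following minimal sketch computes that objective from per-sample log-probabilities and advantage estimates. It is a generic textbook version under those assumptions, not the paper's code; the function name and the `clip_eps=0.2` default are illustrative choices.

```python
import math

def ppo_clipped_objective(new_log_probs, old_log_probs, advantages, clip_eps=0.2):
    """Mean PPO clipped surrogate objective (a quantity to be maximized)."""
    if not advantages:
        raise ValueError("need at least one sample")
    total = 0.0
    for new_lp, old_lp, adv in zip(new_log_probs, old_log_probs, advantages):
        # Probability ratio pi_new(a|s) / pi_old(a|s), from log-probabilities.
        ratio = math.exp(new_lp - old_lp)
        # Keep the ratio inside [1 - eps, 1 + eps].
        clipped = min(max(ratio, 1.0 - clip_eps), 1.0 + clip_eps)
        # Take the pessimistic (smaller) of the unclipped and clipped terms.
        total += min(ratio * adv, clipped * adv)
    return total / len(advantages)
```

If the new policy doubles an action's probability (a ratio of 2) and its advantage is positive, clipping caps that sample's contribution at 1.2 times the advantage, so the optimizer gains nothing by pushing the ratio further.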

The team also designed reward functions that score a robot's grasping and assembly actions during training, guiding what it learns. These include both grasping and assembly constraint reward functions.
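The article does not spell out the paper's reward terms, so the sketch that follows is only a hypothetical illustration of what an assembly constraint reward for a peg-in-hole task might look like: a dense penalty that shrinks as the peg approaches the hole, a bonus for successful insertion, and an episode-ending penalty when a contact-force limit is exceeded. Every threshold and weight in `AssemblyRewardConfig` is an invented placeholder, not a value from the study.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class AssemblyRewardConfig:
    # Hypothetical placeholder values, not the paper's settings.
    success_dist_mm: float = 1.0    # peg counts as inserted below this distance
    max_force_n: float = 20.0       # contact-force limit treated as a constraint
    success_bonus: float = 10.0
    violation_penalty: float = 5.0
    dist_weight: float = 0.1

def assembly_reward(dist_mm, contact_force_n, cfg=AssemblyRewardConfig()):
    """Return (reward, done) for one step of a peg-in-hole episode."""
    if contact_force_n > cfg.max_force_n:
        # Constraint violated (e.g. jamming the peg): penalize and stop.
        return -cfg.violation_penalty, True
    if dist_mm < cfg.success_dist_mm:
        # Peg close enough to the goal: reward success and end the episode.
        return cfg.success_bonus, True
    # Dense shaping term: the closer to the hole, the smaller the penalty.
    return -cfg.dist_weight * dist_mm, False
```

Ending the episode on a constraint violation keeps the learner from collecting shaping reward while applying unsafe forces; a real system would tune these terms to its hardware.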

To assess the potential of their proposed robot training toolbox, Chen, Zhang and their colleagues tested it both in simulation and on physical robotic hardware. In their real-world experiments, the team used a UR5, a lightweight robotic arm often applied to industrial tasks, along with a RealSense D435i camera that collected RGB images of objects for their algorithms to analyze.

"The effectiveness of the proposed framework and algorithms was verified in both simulated and real environments, and the average success rate of grasping in both environments was up to 90%. Under a peg-in-hole assembly tolerance of 3 mm, the assembly success rate was 86.7% and 73.3% in the simulated environment and the physical environment, respectively," the researchers wrote in their paper.

The initial results collected by Chen, Zhang and their collaborators are promising, suggesting that their training algorithms could speed up the programming of industrial robots, rapidly teaching them to grasp and assemble objects reliably. In future studies, the researchers plan to further improve their approach and continue testing it on common grasping and assembly tasks.

"In future work, we will improve the hole detection accuracy and domain randomization of the shape and image of the holes in the virtual environment, optimize the strategy from the simulation environment to the physical environment, and reduce errors in both stages to improve the assembly success rate in the physical environment," the researchers concluded.

More information: Chengjun Chen et al, Robot autonomous grasping and assembly skill learning based on deep reinforcement learning, The International Journal of Advanced Manufacturing Technology (2024). DOI: 10.1007/s00170-024-13004-0

© 2024 Science X Network