April 11, 2024 report

Tiny AI-trained robots demonstrate remarkable soccer skills

A team of AI specialists at Google's DeepMind has used machine learning to teach tiny robots to play soccer. They describe the process for developing the robots in Science Robotics.

As machine-learning-based LLMs make their way into public use, computer engineers continue to look for other applications for AI tools. One goal that has long captured the imagination of scientists and the public at large is building robots that can carry out difficult or arduous tasks traditionally done by humans.

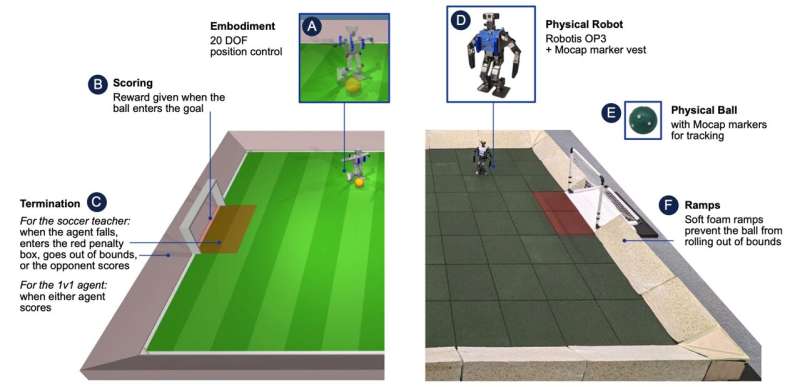

The basic design for most such robots has typically involved using a direct programming or mimicking approach. In this new effort, the research team in the U.K. has applied machine learning to the process and has created tiny robots (approximately 510 mm tall) that are remarkably good at playing soccer.

The process of creating the robots involved using reinforcement learning in computer simulations to develop and train two main skills: getting up off the ground after falling and attempting to score a goal. The team then trained the system to play a full, one-on-one version of soccer using a massive amount of video and other data.

Once the virtual robots could play as desired, the system was transferred to several Robotis OP3 robots. The team also added software that allowed the robots to learn and improve, first as they tested out individual skills and then as they played matches against one another on a small soccer field.

In watching their robots play, the research team noted that many of the robots' moves were smoother than those of robots trained using standard techniques. They could get up off the pitch much faster and more elegantly, for example.

The robots also learned to use techniques such as faking a turn to push an opponent into overcompensating, which opened a path toward the goal area. The researchers claim that their AI robots played considerably better than robots trained with any other technique to date.

More information: Tuomas Haarnoja et al, Learning agile soccer skills for a bipedal robot with deep reinforcement learning, Science Robotics (2024). DOI: 10.1126/scirobotics.adi8022

© 2024 Science X Network