Artificial intelligence system could help counter the spread of disinformation

Disinformation campaigns are not new—think of wartime propaganda used to sway public opinion against an enemy. What is new, however, is the use of the internet and social media to spread these campaigns. The spread of disinformation via social media has the power to change elections, strengthen conspiracy theories, and sow discord.

Steven Smith, a staff member in MIT Lincoln Laboratory's Artificial Intelligence Software Architectures and Algorithms Group, is part of a team that set out to better understand these campaigns by launching the Reconnaissance of Influence Operations (RIO) program. Their goal was to create a system that automatically detects disinformation narratives, as well as the individuals spreading those narratives within social media networks. Earlier this year, the team published a paper on their work in the Proceedings of the National Academy of Sciences, and they received an R&D 100 award last fall.

The project originated in 2014, when Smith and colleagues were studying how malicious groups could exploit social media. They noticed increased and unusual activity in social media data from accounts that appeared to be pushing pro-Russian narratives.

"We were kind of scratching our heads," Smith says of the data. So the team applied for internal funding through the laboratory's Technology Office and launched the program in order to study whether similar techniques would be used in the 2017 French elections.

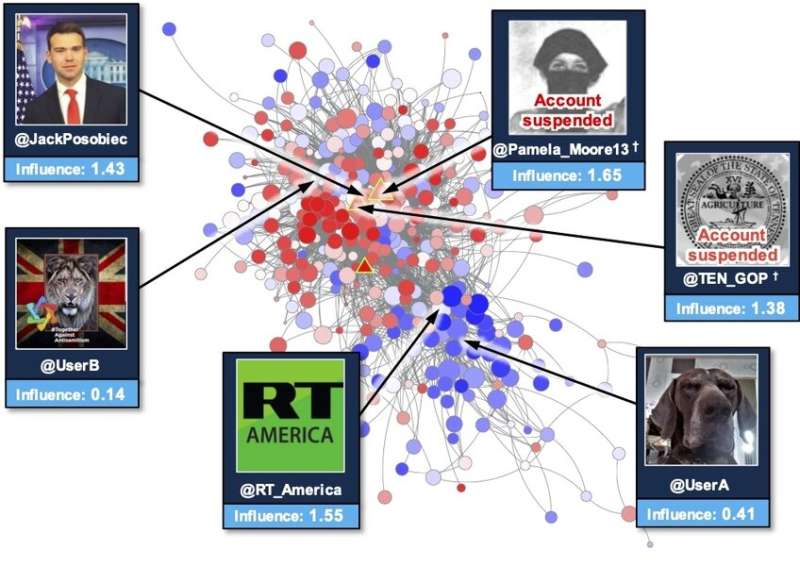

In the 30 days leading up to the election, the RIO team collected real-time social media data to search for and analyze the spread of disinformation. In total, they compiled 28 million Twitter posts from 1 million accounts. Then, using the RIO system, they were able to detect disinformation accounts with 96 percent precision.
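For readers unfamiliar with the metric, precision is the share of accounts flagged by a detector that really belong to the target class. The sketch below shows only the arithmetic; the counts are hypothetical and are not taken from the RIO study.

```python
def precision(true_positives: int, false_positives: int) -> float:
    """Fraction of flagged accounts that were flagged correctly."""
    flagged = true_positives + false_positives
    if flagged == 0:
        raise ValueError("no accounts were flagged")
    return true_positives / flagged


# Hypothetical counts for illustration only: 96 of 100 flagged accounts are correct.
example = precision(true_positives=96, false_positives=4)
print(f"precision = {example:.2f}")
```

Precision on its own does not say how many disinformation accounts went undetected; that is measured separately by recall.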

What makes the RIO system unique is that it combines multiple analytics techniques in order to create a comprehensive view of where and how the disinformation narratives are spreading.

"If you are trying to answer the question of who is influential on a social network, traditionally, people look at activity counts," says Edward Kao, who is another member of the research team. On Twitter, for example, analysts would consider the number of tweets and retweets. "What we found is that in many cases this is not sufficient. It doesn't actually tell you the impact of the accounts on the social network."

As part of his Ph.D. work in the laboratory's Lincoln Scholars program, a tuition fellowship program, Kao developed a statistical approach, now used in RIO, to help determine not only whether a social media account is spreading disinformation but also how much the account causes the network as a whole to change and amplify the message.
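The gap between activity counts and network impact can be seen in a toy example. The sketch below is not the statistical method used in RIO; it substitutes a much simpler measure, the number of accounts reached through chains of retweets, and all account names and numbers are invented for illustration.

```python
from collections import deque

# Hypothetical toy data: each account maps to the accounts that retweeted it.
retweeted_by = {
    "loud_account": [],           # posts often, nobody passes it on
    "quiet_account": ["a", "b"],  # posts once, starts a cascade
    "a": ["c", "d"],
    "b": ["e"],
}
# Hypothetical activity counts (number of posts per account).
post_counts = {"loud_account": 50, "quiet_account": 1}


def downstream_reach(account: str, graph: dict) -> int:
    """Count distinct accounts reached via retweet chains (breadth-first search)."""
    seen = {account}
    queue = deque([account])
    while queue:
        current = queue.popleft()
        for retweeter in graph.get(current, []):
            if retweeter not in seen:
                seen.add(retweeter)
                queue.append(retweeter)
    return len(seen) - 1  # exclude the starting account itself


for name, count in post_counts.items():
    print(name, "posts:", count, "reach:", downstream_reach(name, retweeted_by))
```

Here the account with 50 posts reaches no one, while the account with a single post reaches five others, which is the kind of difference an activity count alone would miss.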

Erika Mackin, another research team member, also applied a new machine learning approach that helps RIO to classify these accounts by looking into data related to behaviors such as whether the account interacts with foreign media and what languages it uses. This approach allows RIO to detect hostile accounts that are active in diverse campaigns, ranging from the 2017 French presidential elections to the spread of COVID-19 disinformation.

Another unique aspect of RIO is that it can detect and quantify the impact of accounts operated by both bots and humans, whereas most automated systems in use today detect bots only. RIO can also help its users forecast how different countermeasures might halt the spread of a particular disinformation campaign.

The team envisions RIO being used by both government and industry, and being applied beyond social media to traditional media such as newspapers and television. Currently, they are working with West Point student Joseph Schlessinger, who is also a graduate student at MIT and a military fellow at Lincoln Laboratory, to understand how narratives spread across European media outlets. A follow-on program is also underway to dive into the cognitive aspects of influence operations and how disinformation affects individual attitudes and behaviors.

"Defending against disinformation is not only a matter of national security, but also about protecting democracy," says Kao.

More information: Steven T. Smith et al., "Automatic detection of influential actors in disinformation networks," Proceedings of the National Academy of Sciences (2021). DOI: 10.1073/pnas.2011216118

This story is republished courtesy of MIT News (web.mit.edu/newsoffice/), a popular site that covers news about MIT research, innovation and teaching.