March 15, 2022 feature

An approach to rapidly and efficiently improve the locomotion of legged robots

In recent years, roboticists have developed mobile robots with a wide range of anatomies and capabilities. One class of robotic systems that has proved particularly promising for navigating unstructured and dynamic environments is legged robots (i.e., robots with two or more legs that often resemble animals).

While legged robots are very promising systems, reliably controlling their movements, or locomotion, can be challenging. Some teams have created locomotion controllers manually, while others have tried to design them automatically using machine learning algorithms. Automatic design can be advantageous, yet it typically entails training machine learning algorithms for long periods of time.

Mathias Thor and Poramate Manoonpong, two researchers at the University of Southern Denmark's Mærsk Mc-Kinney Møller Institute, have recently developed an alternative approach to train controllers for the locomotion of legged robots. This approach, presented in a paper published in Nature Machine Intelligence, can be used to attain locomotion behaviors of varying complexity within short periods of time.

"Our paper was based on previous work of mine, where I used central pattern generators (CPGs) for locomotion control of legged robots," Mathias Thor, one of the researchers who carried out the study, told TechXplore. "The primary objective of this new study was to show that a locomotion controller can be simple and comprehensible yet capable of generating complex locomotion behaviors."

The new and flexible controller developed by Thor and Manoonpong is based on a bio-inspired, artificial central pattern generator (CPG) and a premotor neural network. CPGs are biological neural circuits that allow animals to innately move in rhythmic patterns, resulting in behaviors such as breathing, walking, flying, and swimming. Many computer scientists have recently been trying to replicate these biological systems in machines, to enable different types of robot locomotion.

"The CPG generates a rhythmic signal for the motors to follow, and the premotor neural network reshapes the CPG output for high performance," Thor explained. "The reshaping is based on the robot morphology and sensory feedback. The key advantages of our control approach are that it learns quickly and is comprehensible and modular."

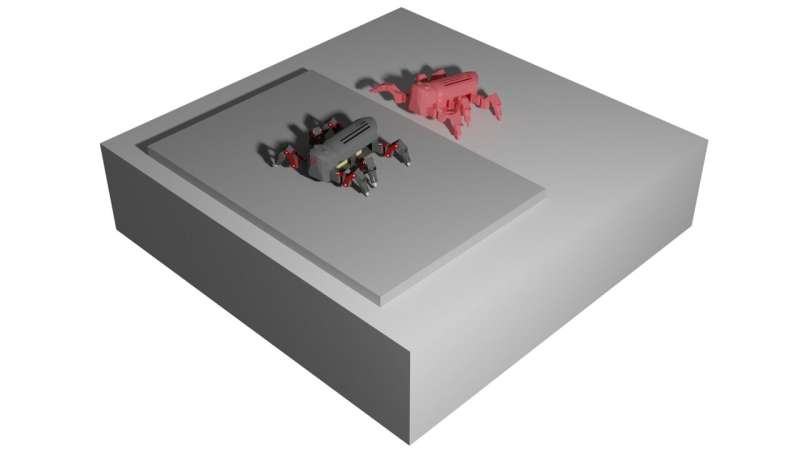
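
The division of labor Thor describes, with a CPG producing a rhythmic signal and a premotor network reshaping it into motor commands, can be illustrated with a minimal sketch. This is not the authors' implementation. It assumes a two-neuron oscillator as the CPG and a single hand-weighted `tanh` layer as the premotor stage; the function names, the parameters `phi` and `alpha`, and the weight values are all hypothetical and chosen only for illustration.

```python
import math

def cpg_step(state, phi=0.1 * math.pi, alpha=1.1):
    """Advance an assumed two-neuron oscillator by one time step.

    The weights form a rotation by angle phi, scaled by alpha > 1 so the
    rhythm does not die out; tanh keeps the amplitude bounded.
    """
    o1, o2 = state
    w_self = alpha * math.cos(phi)
    w_cross = alpha * math.sin(phi)
    return (math.tanh(w_self * o1 + w_cross * o2),
            math.tanh(-w_cross * o1 + w_self * o2))

def premotor(cpg_out, weights, feedback=0.0, feedback_gain=0.0):
    """Reshape the CPG output into one command per joint.

    Each joint has a hypothetical weight pair (wa, wb). In a learning
    setup these weights would be trained; here they are fixed by hand.
    """
    o1, o2 = cpg_out
    return [math.tanh(wa * o1 + wb * o2 + feedback_gain * feedback)
            for wa, wb in weights]

def run(steps, weights):
    """Generate a sequence of motor commands from the rhythmic signal."""
    state = (0.2, 0.0)  # small non-zero start so the oscillation builds up
    commands = []
    for _ in range(steps):
        state = cpg_step(state)
        commands.append(premotor(state, weights))
    return commands

if __name__ == "__main__":
    # Two joints with different (made-up) reshaping weights.
    for cmd in run(5, [(1.0, 0.0), (0.5, -0.5)]):
        print([round(v, 3) for v in cmd])
```

In this sketch, changing the weight pairs changes the shape and phase of each joint's trajectory without touching the rhythm itself, which is one way to picture how a shared rhythm could be adapted to a particular robot body.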

As part of their study, the researchers evaluated their approach on a physical six-legged robot called MORF. In their tests, the controller produced the locomotion behaviors they were aiming for after very short training times.

The new approach is also highly flexible and adaptable, as it allows developers to easily add new behavior-specific modules, producing increasingly complex locomotion behaviors. In the future, it could be used by roboticists and computer scientists worldwide to rapidly train a wide range of legged robots to navigate their surroundings in new and effective ways.
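
One way to picture adding behavior-specific modules is as a list of small functions whose outputs are combined into a single motor command. The sketch below assumes the outputs are simply summed per joint; the article does not say how the modules are combined, and the module names (`base_walk`, `tilt_compensation`), the `pitch` sensor key, and the gain of 0.5 are hypothetical.

```python
from typing import Callable, Dict, List

# A module maps the rhythmic signal and sensor readings to per-joint offsets.
Module = Callable[[float, Dict[str, float]], List[float]]

def base_walk(rhythm: float, sensors: Dict[str, float]) -> List[float]:
    """Hypothetical base module: drive two joints in anti-phase."""
    return [rhythm, -rhythm]

def tilt_compensation(rhythm: float, sensors: Dict[str, float]) -> List[float]:
    """Hypothetical add-on module: counteract body pitch on both joints."""
    pitch = sensors.get("pitch", 0.0)
    return [-0.5 * pitch, -0.5 * pitch]

def combine(modules: List[Module], rhythm: float,
            sensors: Dict[str, float], n_joints: int = 2) -> List[float]:
    """Sum the contributions of all modules (assumed combination rule)."""
    total = [0.0] * n_joints
    for module in modules:
        for i, value in enumerate(module(rhythm, sensors)):
            total[i] += value
    return total

if __name__ == "__main__":
    sensors = {"pitch": 0.2}
    print(combine([base_walk], 0.3, sensors))
    print(combine([base_walk, tilt_compensation], 0.3, sensors))
```

Under this assumption, a new behavior is added by appending one more function to the list, and existing modules stay unchanged.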

"When using our approach, locomotion controllers do not need to be complex or train for many hours or days," Thor added. "On the contrary, complex locomotion can emerge from many simple modules acting in parallel. Since the controller can learn new behaviors in less than 30 minutes, we want to learn the locomotion behaviors directly on a real-world legged robot instead of a simulated one."

More information: Mathias Thor et al, Versatile modular neural locomotion control with fast learning, Nature Machine Intelligence (2022). DOI: 10.1038/s42256-022-00444-0

P. MORF—modular robot framework. Proceedings of the 2nd International Youth Conference of Bionic Engineering (2018). p. 21–23.

© 2022 Science X Network